Users should be able to trust the data managed by the software applications that make up Information Systems (IS). Organizations therefore need to ensure an appropriate level of quality for the data they manage in their IS, and an adequate level of data quality should be an essential requirement for every organization. Many advances have been made in recent years in software quality management at both the process and the product level. This is reflected in the number of global standards that have been developed and have evolved to address specific issues: quality models (ISO 25000, ISO 9126), process maturity models (ISO 15504, CMMI), and standards focused mainly on software verification and validation (ISO 12207, IEEE 1028, etc.). These standards have been in use worldwide for over 15 years.

However, awareness of software quality depends on other variables, such as the quality of the information and data managed by the application. This is recognized by the SQuaRE standards (ISO/IEC 25000), which highlight the need to deal with data quality as part of assessing the quality of a software product, and according to which “the target computer system also includes computer hardware, non-target software products, non-target data, and the target data, which is the subject of the data quality model”. This means that organizations should take data quality concerns into account when developing software, as data is a key factor. To this end, we stress that such concerns should be considered at the initial stages of software development, following the “data quality by design” principle. The name echoes “quality by design”, which is discussed relatively often, but usually with far less (if any) attention to “data quality” as a subset of the “quality” concept for data and information artifacts.

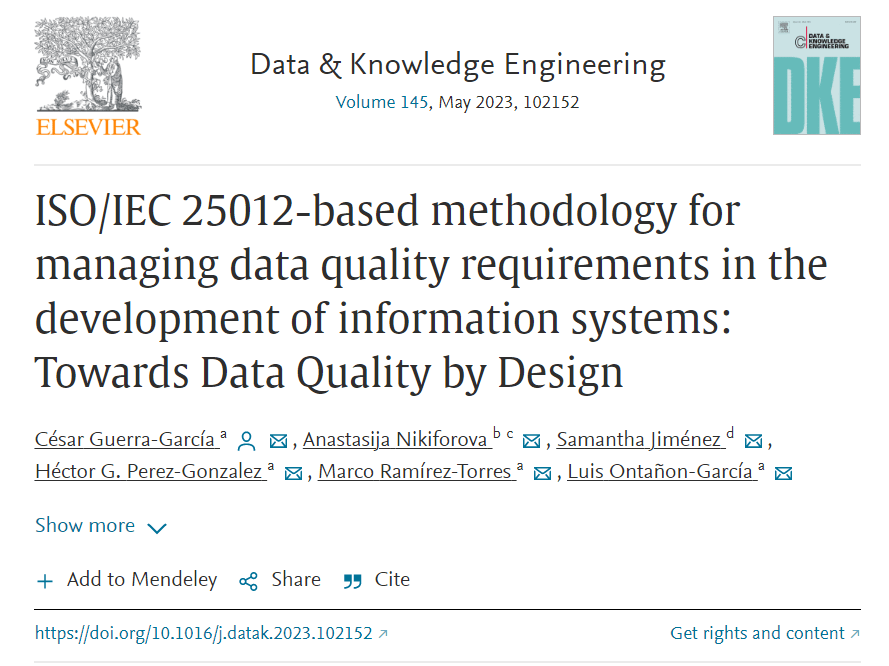

The “data quality” concept is considered to be multidimensional and largely context dependent, which makes managing specific data quality requirements a difficult task. The main objective of our new paper, titled “ISO/IEC 25012-based methodology for managing data quality requirements in the development of information systems: Towards data quality by design”, is therefore to present DAQUAVORD, a methodology for Project Management of Data Quality Requirements Specification aimed at eliciting DQ requirements that arise from different users’ viewpoints. These requirements should then be treated like any other requirements, both functional and non-functional, when developing an IS that takes Data Quality into account by default, leading to smarter and more collaborative development.

In a bit more detail, we introduce the concept of a Data Quality Software Requirement as a way to implement a Data Quality Requirement in an application. A Data Quality Software Requirement is a software requirement aimed at satisfying a Data Quality Requirement. The justification for this concept is that we want to capture the Data Quality Requirements that best match the data used by a user in each usage scenario, and then derive the corresponding Data Quality Software Requirements, which complement the normal software requirements linked to each of those scenarios. Addressing multiple Data Quality Software Requirements is indisputably a complex process, given the strong dependencies involved, such as internal constraints and interaction with external systems, and the diversity of users. As a result, these requirements tend to overlap and contradict one another, with consequences for both process and data models.
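To make the relationship between these concepts more concrete, here is a minimal Python sketch. It is not taken from the paper: the class names, fields, the `overlapping_requirements` helper, and the sample viewpoints and statements are all hypothetical, and the enum lists only a handful of the ISO/IEC 25012 characteristics. It shows a Data Quality Requirement tied to a viewpoint and a usage scenario, a Data Quality Software Requirement that satisfies it, and one simple way to flag data items on which several viewpoints place requirements, which is where overlaps and contradictions would need to be reviewed.

```python
from collections import defaultdict
from dataclasses import dataclass
from enum import Enum


class DQCharacteristic(Enum):
    """A few of the data quality characteristics named in ISO/IEC 25012."""
    ACCURACY = "accuracy"
    COMPLETENESS = "completeness"
    CONSISTENCY = "consistency"
    CURRENTNESS = "currentness"
    CONFIDENTIALITY = "confidentiality"


@dataclass(frozen=True)
class DataQualityRequirement:
    """What one viewpoint expects of a data item in one usage scenario (hypothetical model)."""
    viewpoint: str
    scenario: str
    data_item: str
    characteristic: DQCharacteristic
    statement: str


@dataclass(frozen=True)
class DataQualitySoftwareRequirement:
    """A software requirement whose purpose is to satisfy one Data Quality Requirement."""
    satisfies: DataQualityRequirement
    description: str


def overlapping_requirements(dqrs):
    """Return the (data_item, characteristic) groups raised by more than one viewpoint."""
    groups = defaultdict(list)
    for req in dqrs:
        groups[(req.data_item, req.characteristic)].append(req)
    return {
        key: reqs
        for key, reqs in groups.items()
        if len({req.viewpoint for req in reqs}) > 1
    }


# Illustrative data only; these viewpoints and statements are invented.
dqrs = [
    DataQualityRequirement(
        "Customer", "Place order", "delivery_address",
        DQCharacteristic.COMPLETENESS,
        "Street, city and postal code must all be filled in.",
    ),
    DataQualityRequirement(
        "Courier", "Deliver order", "delivery_address",
        DQCharacteristic.COMPLETENESS,
        "A contact phone number must accompany the address.",
    ),
    DataQualityRequirement(
        "Accountant", "Issue invoice", "order_total",
        DQCharacteristic.ACCURACY,
        "The total must equal the sum of the line items.",
    ),
]

dqsr = DataQualitySoftwareRequirement(
    satisfies=dqrs[0],
    description="The order form rejects submission while any address field is empty.",
)

overlaps = overlapping_requirements(dqrs)
for (item, characteristic), reqs in overlaps.items():
    print(item, characteristic.value, sorted(req.viewpoint for req in reqs))
```

Grouping by data item and characteristic is only one possible heuristic; it surfaces candidates for review and does not decide whether two requirements actually contradict each other.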

Given this complexity, and to reduce the development effort it causes, we introduce DAQUAVORD, a Methodology for Project Management of Data Quality Requirements Specification, which is based on the Viewpoint-Oriented Requirements Definition (VORD) method and the latest and most generally accepted ISO/IEC 25012 standard. It is universal and easily adaptable to different information systems in terms of their nature, the number and variety of actors, and other aspects. The paper presents both the concept of the methodology and an example of its application, which serves as step-by-step guidance on how to use it to achieve smarter software development with data quality by design. This paper is a continuation of our previous study. It poses the following research questions (RQs):

RQ1: What is the state of the art regarding the “data quality by design” principle in the area of software development? What are (if any) current approaches to data quality management during the development of IS?

RQ2: How should the concepts of Data Quality Requirements (DQR) and the Viewpoint-Oriented Requirements Definition (VORD) method be defined and implemented in order to promote the “data quality by design” principle?

Sounds interesting? Read the full text of the article published in Elsevier Data & Knowledge Engineering – here.

This paper presents the first comprehensive approach to this problem, setting out a methodology for project management of the specification of data quality requirements. Given the relative nature of the concept of “data quality” and the ongoing discussions about a universal view of data quality dimensions, we have based our proposal on the latest and most generally accepted ISO/IEC 25012 standard, seeking better integration of the methodology with the documentation, systems, and projects that already exist in the organization. We expect this methodology to help Information System developers plan and carry out a proper elicitation and specification of the data quality requirements expressed by the different roles (viewpoints) that interact with the application. It can serve as a guide that analysts follow when writing a Requirements Specification Document supplemented with Data Quality management. Identifying and classifying data quality requirements at the initial stage makes it easier for developers to stay aware of the data quality to be implemented for each function throughout the development of the application.

As future work, we plan to consider the advantages provided by the Model Driven Architecture (MDA), focusing mainly on its abstraction and modelling capabilities. This should make it much easier to integrate our results into the development of “Data Quality aware Information Systems” (DQ-aware-IS) alongside other software development methodologies and tools. This, however, is expected to expand the scope of the developed methodology and to bring in various features related to data quality, including the development of a conceptual measure of data value, i.e., intrinsic value, as proposed in.

UPDATE: In July 2023 it also became one of the most downloaded articles from Data & Knowledge Engineering (Elsevier) in the last 90 days. Have not read it yet? Take a look, it is waiting for you 😉

César Guerra-García, Anastasija Nikiforova, Samantha Jiménez, Héctor G. Perez-Gonzalez, Marco Ramírez-Torres, Luis Ontañon-García, ISO/IEC 25012-based methodology for managing data quality requirements in the development of information systems: Towards Data Quality by Design, Data & Knowledge Engineering, 2023, 102152, ISSN 0169-023X, https://doi.org/10.1016/j.datak.2023.102152