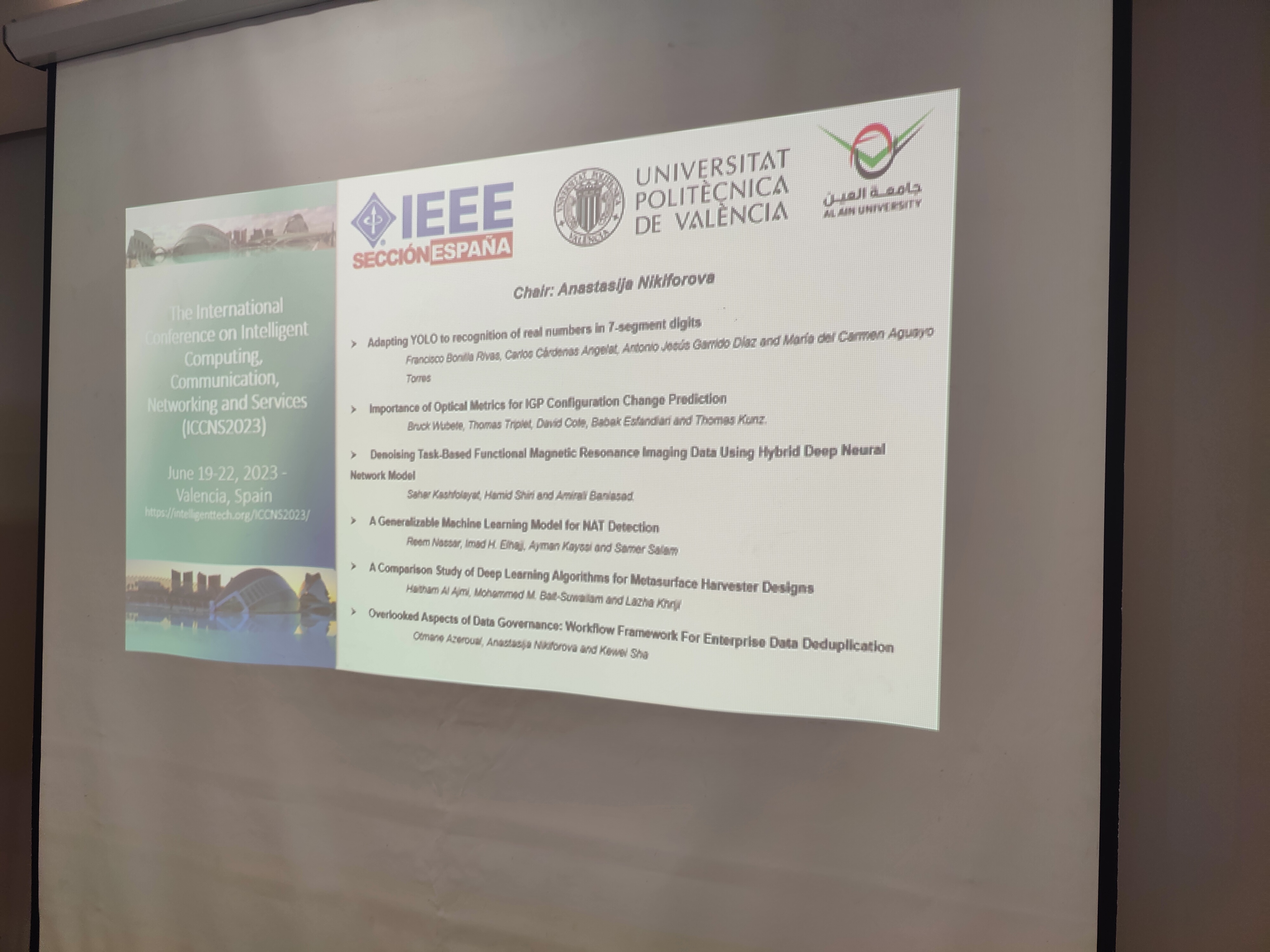

This time I would like to recommend the new paper “Overlooked aspects of data governance: workflow framework for enterprise data deduplication”, which has just been presented at the IEEE-sponsored International Conference on Intelligent Computing, Communication, Networking and Services (ICCNS2023). This “just”, btw, means June 19, the day after my birthday, so I decided to start my new year with one more conference and paper. And yes, this means that what many of those who congratulated me were wishing me (to find time for myself, reach work-life balance etc.) is still something I have to try to achieve, because this time I again gave preference to my career over my personal life (what a surprise, isn’t it?) 🙂 Moreover, this is a conference where I am also a member of the Steering Committee and the Technical Program Committee, as well as publicity chair. During the conference, I also chaired its first session, which I consider a special honor. For me the session was very smooth, interactive and insightful, of course thanks to its participants and authors and their studies, which allowed us to have a fruitful discussion and get some insights for our further studies (yes, I also got one very useful idea for further investigation). Thank you to all contributors, with special thanks to Francisco Bonilla Rivas, Bruck Wubete, Reem Nassar, Haitham Al Ajmi.

I am also proud of having brought in one of the four keynote speakers for this conference: prof. Eirini Ntoutsi from the Bundeswehr University Munich (UniBw-M), Germany, who delivered the keynote “Bias and Discrimination in AI Systems: From Single-Identity Dimensions to Multi-Discrimination”. I heard this talk at one of the previous conferences I attended and decided that it is a “must” for our conference as well, so I am super glad that Eirini accepted our invitation! I will immediately add that the other keynotes were excellent as well: Giancarlo Fortino (University of Calabria, Italy), Dofe Jaya (Computer Engineering Department, California State University, Fullerton, California, USA), Sandra Sendra (Polytechnic University of Valencia, Spain).

The paper I presented was written by a team of three: Otmane Azeroual (German Centre for Higher Education Research and Science Studies (DZHW), Germany), myself, Anastasija Nikiforova (Faculty of Science and Technology, Institute of Computer Science, University of Tartu, Estonia & Task Force “FAIR Metrics and Data Quality”, European Open Science Cloud), and Kewei Sha (College of Science and Engineering, University of Houston Clear Lake, USA). A very international team.

So, what is the paper about? It is (or should be) clear that data quality in companies is decisive and critical to the benefits their products and services can provide. However, in heterogeneous IT infrastructures where, e.g., different applications for Enterprise Resource Planning (ERP), Customer Relationship Management (CRM), product management, manufacturing, and marketing are used, duplicates occur, e.g., multiple entries for the same customer or product in a database or information system. There can be several reasons for this, including but not limited to the growing volume of data, the adoption of cloud technologies, the use of multiple different sources, and the proliferation of connected personal and work devices in homes, stores, offices and supply chains.

Whatever the reason, the result of non-unique or duplicate records is degraded data quality, which ultimately leads to inaccurate analysis and to poor, distorted or skewed decisions and insights from Business Intelligence (BI) or machine learning (ML) algorithms, models, forecasts, and simulations that take the data as input. It also affects other data-driven activities such as service personalisation (in terms of accuracy, trustworthiness and reliability, user acceptance / adoption and satisfaction), customer service, risk management, crisis management, and resource management (time, human, and fiscal), not to mention wasted resources and employees who are less likely to trust the data and associated applications, which in turn affects the company image. All this can lead to the failure of a project, if not of a business.

At the same time, the amount of data that companies collect is growing exponentially, which makes it difficult to manage effectively. Thus, both ex-ante and ex-post deduplication mechanisms are critical in this context to ensure sufficient data quality, and they are usually integrated into a broader data governance approach. In this paper, we develop such a conceptual data governance framework for effective and efficient management of duplicate data, and for improving data accuracy and consistency in medium to large data ecosystems. We present methods and recommendations for companies to deal with duplicate data in a meaningful way, and the presented framework is integrated into one of the most popular data quality tools, DataCleaner.

In short, in this paper we:

- first, we present methods for how companies can deal meaningfully with duplicate data. Initially, we focus on data profiling using several analysis methods applicable to different types of datasets, including analysis of different types of errors, structuring, harmonizing, and merging of duplicate data;

- second, we propose methods for reducing the number of comparisons and for matching attribute values based on similarity (in medium to large databases). The focus is on easy integration and easy configuration of duplicate detection, so that the solution can be adapted to different users in companies without domain knowledge. These methods are domain-independent and can be transferred to other application contexts to evaluate the quality, structure, and content of duplicate / repetitive data;

- finally, we integrate the chosen methods into the framework of Hildebrandt et al. [ref 2]. We also explore some of the most common data quality tools in practice, into which we integrate this framework.
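To make the second point more tangible, here is a minimal sketch of what “reducing the number of comparisons” and “matching attribute values based on similarity” can look like. It is not the implementation from the paper: the records, the field names, the choice of `city` as a blocking key, and the 0.85 threshold are all assumptions made for illustration, and the similarity measure is simply the one from Python’s standard `difflib` module.

```python
from collections import defaultdict
from difflib import SequenceMatcher
from itertools import combinations

# Hypothetical customer records; the field names are illustrative only.
records = [
    {"id": 1, "name": "Anna Schmidt", "city": "Berlin"},
    {"id": 2, "name": "Ana Schmidt", "city": "Berlin"},
    {"id": 3, "name": "Peter Meyer", "city": "Hamburg"},
    {"id": 4, "name": "Anna Schmitt", "city": "Munich"},
]


def blocking_key(record):
    """Assumed blocking key: the normalised city name."""
    return record["city"].strip().lower()


def similarity(a, b):
    """String similarity in [0, 1] based on difflib's matching blocks."""
    return SequenceMatcher(None, a.lower(), b.lower()).ratio()


def find_candidate_duplicates(records, threshold=0.85):
    # Step 1 (blocking): only records sharing a key are compared at all,
    # which avoids comparing every record with every other record.
    blocks = defaultdict(list)
    for record in records:
        blocks[blocking_key(record)].append(record)

    # Step 2 (matching): pairwise similarity inside each block.
    pairs = []
    for block in blocks.values():
        for left, right in combinations(block, 2):
            score = similarity(left["name"], right["name"])
            if score >= threshold:
                pairs.append((left["id"], right["id"], round(score, 2)))
    return pairs


duplicates = find_candidate_duplicates(records)
print(duplicates)
```

With this toy data only records 1 and 2 are compared and flagged. Record 4 sits in a different block and is never compared with them, which shows the trade-off of blocking: fewer comparisons, but a poorly chosen key can hide true duplicates.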

After that, we test and validate the framework. The final refined solution provides the basis for subsequent use. It consists of detecting and visualizing duplicates, presenting the identified redundancies to the user in a user-friendly manner to enable and facilitate their further elimination.
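As a rough illustration of the step between detection and presentation, matched pairs are usually easier for a user to review when they are grouped into clusters of records that presumably describe the same entity. The sketch below does this with a small union-find structure. The pairs are made up, and the function name `cluster_duplicates` is hypothetical; it is not part of the framework or of any tool mentioned here.

```python
def cluster_duplicates(pairs):
    """Group pairwise matches into clusters of record ids (union-find)."""
    parent = {}

    def find(x):
        # Register unseen ids as their own root, then walk up to the root
        # while shortening the path on the way.
        parent.setdefault(x, x)
        while parent[x] != x:
            parent[x] = parent[parent[x]]
            x = parent[x]
        return x

    # Merge the clusters of every matched pair.
    for a, b in pairs:
        root_a, root_b = find(a), find(b)
        if root_a != root_b:
            parent[root_b] = root_a

    # Collect the members of each cluster under its root.
    clusters = {}
    for record_id in list(parent):
        clusters.setdefault(find(record_id), []).append(record_id)
    return sorted(sorted(members) for members in clusters.values())


# Hypothetical output of a duplicate detection step: pairs of record ids.
pairs = [(1, 2), (2, 5), (7, 8)]
groups = cluster_duplicates(pairs)
print(groups)  # [[1, 2, 5], [7, 8]]
```

Each inner list can then be shown to the user as one group of suspected duplicates to confirm, merge, or reject.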

With this paper we aim to support research in data management and data governance by identifying duplicate data at the enterprise level and meeting today’s demands for increased connectivity / interconnectedness, data ubiquity, and multi-data sourcing. In addition, the proposed conceptual data governance framework aims to provide an overview of data quality, accuracy and consistency to help practitioners approach data governance in a structured manner.

In general, technological solutions alone are not enough. We do need solutions that identify / detect poor quality data and allow their examination and correction, or that prevent such data by integrating controls into the system design, striving for “data quality by design” [ref3, ref4], but we also need cultural changes related to data management and governance within the organization. These two perspectives form the basis of a healthy business data ecosystem. Thus, the presented framework describes the hierarchy of people who are allowed to view and share data, rules for data collection, data privacy, data security standards, and channels through which data can be collected. Ultimately, this framework will help users be more consistent in data collection and data quality for reliable and accurate results of data-driven actions and activities.

Sounds interesting? Read the paper -> here (to be cited as: Azeroual, O., Nikiforova, A., Sha, K. (2023, June). Overlooked aspects of data governance: workflow framework for enterprise data deduplication. In 2023 International Conference on Intelligent Computing, Communication, Networking and Services (ICCNS2023). IEEE (in print))

The International Conference on Intelligent Computing, Communication, Networking and Services (ICCNS2023) is collocated with the International Conference on Multimedia Computing, Networking and Applications (MCNA2023); both are sponsored by IEEE (IEEE Espana Seccion), Universitat Politècnica de València, and Al Ain University. Great thanks to the organizers: Jaime Lloret, Universitat Politècnica de València, Spain & Yaser Jararweh, Jordan University of Science and Technology, Jordan & Marios C. Angelides, Brunel University London, UK & Muhannad Quwaider, Jordan University of Science and Technology, Jordan.

References:

Azeroual, O., Nikiforova, A., Sha, K. (2023, June). Overlooked aspects of data governance: workflow framework for enterprise data deduplication. In 2023 International Conference on Intelligent Computing, Communication, Networking and Services (ICCNS2023). IEEE (in print).

Hildebrandt, K., Panse, F., Wilcke, N., & Ritter, N. (2017). Large-scale data pollution with Apache Spark. IEEE Transactions on Big Data, 6(2), 396-411.

Guerra-García, C., Nikiforova, A., Jiménez, S., Perez-Gonzalez, H. G., Ramírez-Torres, M., & Ontañon-García, L. (2023). ISO/IEC 25012-based methodology for managing data quality requirements in the development of information systems: Towards Data Quality by Design. Data & Knowledge Engineering, 145, 102152.

Corrales, D. C., Ledezma, A., & Corrales, J. C. (2016). A systematic review of data quality issues in knowledge discovery tasks. Revista Ingenierías Universidad de Medellín, 15(28), 125-150.